published on November 03, 2020 in news

Economics is not like other fields of science. Chemists and biologists have the luxury of being able to perform experiments in a laboratory and see whether their theories hold up. Economists cannot perform experiments without potentially creating disastrous consequences such as unemployment, market crashes, or recessions. So, how can we observe and test economic theories without the fear of a financial catastrophe? Why not simulate an open economy with real supply and demand and multiple currencies, and incentivize participants to produce and profit?

That is exactly what Prosperous Universe attempts to do, and we are going to take a look at what economic models can be observed and what can be learned from each identified theory.

Supply and Demand

Let’s start with an easy one: supply and demand. A normal market transaction takes place between two parties, the buyer and the seller. The price is dictated by supply and demand since a rational buyer will not pay more for a plentiful item that can be bought cheaper elsewhere. Likewise, a rational seller will not sell an item at a lower price if others are willing to pay more for it. Other variables, such as income, also play a role in determining whether the transaction takes place. As the price rises, the quantity demanded declines while the quantity supplied increases. If left alone, the system will move towards the market-clearing price, or equilibrium.
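To make the idea of a market-clearing price concrete, here is a minimal sketch that assumes straight-line demand and supply curves. The function names and the numbers are illustrative assumptions, not figures from the game. The first function solves for the price at which quantity demanded equals quantity supplied; the second mimics a market "left alone" by raising the price when there is excess demand and lowering it when there is excess supply.

```python
def clearing_price(a, b, c, d):
    """Solve a - b*p = c + d*p for the price p, then return (p, quantity).

    Assumed linear curves: demand Qd = a - b*p, supply Qs = c + d*p.
    """
    if b + d == 0:
        raise ValueError("curves are parallel; no unique clearing price")
    price = (a - c) / (b + d)
    return price, a - b * price


def adjust_toward_equilibrium(price, a, b, c, d, step=0.1, rounds=200):
    """Nudge the price up when demand exceeds supply and down otherwise."""
    for _ in range(rounds):
        excess_demand = (a - b * price) - (c + d * price)
        price += step * excess_demand
    return price


# Hypothetical curves: Qd = 100 - 2p, Qs = 10 + p
price, quantity = clearing_price(a=100, b=2, c=10, d=1)
print(price, quantity)  # 30.0 40.0
```

Starting the adjustment loop from any nearby price, for example `adjust_toward_equilibrium(10, 100, 2, 10, 1)`, settles close to the same price of 30, provided the step size is small enough for these curves.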

Perfect competition is a model that economists use to help illuminate several types of markets. In an ideal world, perfect competition arises when there are vast numbers of identical suppliers and people wanting to purchase the same item. There is no cost associated with finding a buyer or seller and nothing that prevents anyone from entering the market. Those involved cannot affect prices and take the market price as given. Perfect competition produces an economy without excess. Suppliers will be happy to produce goods that can be sold for more than the cost of production, and buyers will continue to buy goods as long as their satisfaction from consuming them outweighs the price. New suppliers will come into the market if prices suddenly rise, which in turn increases supply and drives the price back down. Suppliers that cannot cover their costs when prices fall will have to abandon their trade.
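The entry-and-exit mechanism described above can be sketched as a toy simulation. Everything here is an assumption made for illustration: identical suppliers that each produce one unit, a single unit cost, and a straight-line demand curve. One supplier enters whenever the price is above cost and one leaves whenever it is below.

```python
def simulate_entry(num_firms, unit_cost, demand_intercept, demand_slope,
                   output_per_firm=1.0, rounds=100):
    """Return (number of firms, price) after suppliers enter or exit.

    Assumed inverse demand: price = intercept - slope * total output,
    floored at zero.
    """
    price = 0.0
    for _ in range(rounds):
        total_output = num_firms * output_per_firm
        price = max(0.0, demand_intercept - demand_slope * total_output)
        if price > unit_cost:
            num_firms += 1   # profit attracts a new supplier
        elif price < unit_cost and num_firms > 0:
            num_firms -= 1   # losses push a supplier out
        else:
            break            # price equals cost: no reason to move
    return num_firms, price


print(simulate_entry(5, unit_cost=20, demand_intercept=100, demand_slope=1))
# (80, 20.0)
```

Starting with too many suppliers instead (say 120) ends at the same point, which is the sense in which perfect competition leaves no excess. If the numbers are chosen so that the price can never land exactly on the cost, the loop simply hovers one supplier above and below that level.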

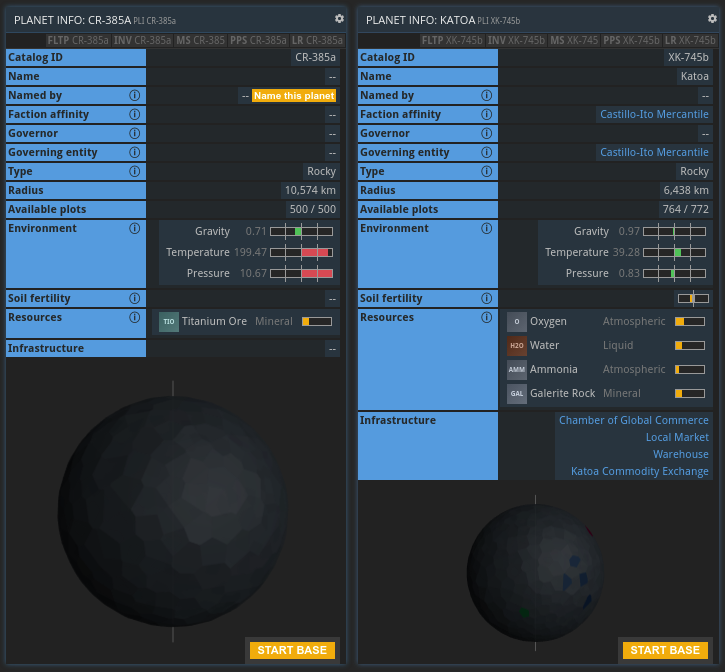

Perfect competition is something that can be observed in Prosperous Universe because the game simulates an open economy. All players have access to the same toolset at the start of the game and can select their profession based on their own preferences. This represents a low barrier to entry, and anyone can become a farmer, metallurgist, chemist, etc. Players can also easily find a buyer or seller since everyone has access to the market exchange. Goods such as PSL (a plastic material) and copper, which are in high demand but short supply because of the time they take to produce, encourage more players to go into the corresponding professions in order to satisfy the demand. Once supply catches up and demand eases, many players choose to focus on producing different commodities, such as electronic products that require complicated supply chains.

Behavioral Economics

Now we can get into comparative advantage. When a country or state is able to produce a certain good or service at a lower opportunity cost than others in its trading circle, then that country or state has a comparative advantage. In the famous example given by David Ricardo, England and Portugal specialized their trade once it was clear that Portugal could produce wine at a lower opportunity cost than England. England, on the other hand, could produce cloth at a lower opportunity cost than Portugal. It was advantageous for Portugal to stop producing cloth and solely produce wine, where it had the advantage. The same was true for England, which would stop producing wine and focus on cloth manufacturing.
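A small worked example may help here. The sketch below uses the labor figures usually quoted for Ricardo's illustration (workers needed for a year to produce one unit of each good); the helper names are hypothetical. In these figures Portugal needs less labor than England for both goods, so the comparison that decides who specializes in what is the opportunity cost: how much of the other good a country gives up to make one more unit.

```python
# Labor needed per unit of output, as commonly quoted for Ricardo's example.
labor_cost = {
    "England": {"cloth": 100, "wine": 120},
    "Portugal": {"cloth": 90, "wine": 80},
}


def opportunity_cost(country, good, other):
    """Units of `other` given up to produce one unit of `good`."""
    costs = labor_cost[country]
    return costs[good] / costs[other]


def comparative_advantage(good, other):
    """Country with the lowest opportunity cost of producing `good`."""
    return min(labor_cost, key=lambda c: opportunity_cost(c, good, other))


print(comparative_advantage("cloth", "wine"))  # England
print(comparative_advantage("wine", "cloth"))  # Portugal
```

One unit of cloth costs England about 0.83 units of wine but costs Portugal 1.125, so England specializes in cloth even though Portugal is the cheaper producer in absolute terms.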

We see the same kind of specialization taking place within Prosperous Universe. Players that opt to live on Promitor, one of the planets in the game with an atmosphere ideal for agriculture, will find themselves mainly producing food for the other players in the universe since they can do so more efficiently than others that live on harsh-climate planets. Montem, a planet rich in metals, mainly sees players go into the mining industry. Berthier, on the other hand, industrialized early and houses players that produce and sell specialized products that are needed by every player that wishes to build additional settlements.

Further examples of behavioral economics can be found in real-world comparisons as planets begin to mature and develop into nation-states. Promitor is often compared to Tsarist Russia in the 19th and early 20th centuries, when agriculture was its main source of trade. While other countries like Britain were undergoing industrialization, Russia continued to be the largest exporter of crops like wheat. The refusal to embrace modern developments came at a cost for Russia, which was seen in the Russo-Japanese War. The Russians were unable to defeat the Japanese, which spurred them to industrialize, starting with their military. Promitor similarly continued to produce agricultural products while importing costly industrial materials, causing the planet to lose considerable wealth. This forced residents of Promitor to quickly industrialize and produce their own building materials rather than import them.

Interestingly enough, Promitor players were so enthusiastic about industrializing that most of the farmers became manufacturers. This caused problems for the planet since food, an essential commodity, became scarce, which almost resulted in famine. This brings up another economics topic, the Tragedy of the Commons. The theory states that in a shared-resource economy, individuals will act in their own self-interest and disregard the common good of the total population, so that instead of thriving, resources become spoiled or depleted. For this reason, many players form corporations where they work together toward a common goal from which all can benefit. Many times, these corporations are powerful enough to bail out others on different planets when times get hard, or to enact their own devious schemes to take over entire industries or control certain commodities.

Another interesting economic development that transpired in Prosperous Universe was the colonization of the planet Ironforge. Ironforge is a remote planet with a climate similar to Antarctica, meaning that it was practically uninhabitable. However, Ironforge was rich in iron and limestone, which were in extreme demand throughout the galaxy. This caught the eyes of a particular company that sought to harvest these resources. The members created a complex strategy where each player had a specific role to fulfill such as only mining iron or only mining limestone. Others would produce higher level materials from iron and limestone that were running short across the universe. They also agreed to sell commodities at cost to one another so that each player benefitted from the project and no one was working for free. The colonization of the planet was only possible because of the coordination and trust that these players placed in one another and the payoff was immense.

Keynesian Economics and Government Intervention

When countries go through recessions and depressions, we usually see governments spend more money to ease the burden on the economy. This type of economic practice is tied to Keynesian economics, in which governments intervene during the short run of an economic cycle. Keynesian economics stands in contrast to classical economic theories, under which economies were left alone to eventually stabilize after a period of depression. In Prosperous Universe, advanced players that run successful in-game companies can be elected as governors of particular planets. As a governor, a player can increase or decrease the tax rate for the residents of the planet, much like a government can do in the real world. When certain essential commodities become scarce, governors can rally players to intervene before circumstances become even more dire.

One such example of this kind of intervention took place on Montem, a highly industrialized planet lacking in agricultural resources. As a result of an influx of new players into the game, the price of food was incredibly high, leaving agricultural planets like Promitor with the sole responsibility of feeding the entire universe. Promitor and others could not keep up with the demand, and the resulting food shortage created a famine on Montem, which had largely ignored the need to incentivize farming. The governor of Montem stepped in to provide players with farming supplies in order to stimulate and expedite the farming industry on a starving planet. After the famine subsided, residents of Montem were able to buy food supplies at a lower cost than they had previously since they were not totally dependent upon Promitor’s shipments.

“Market Makers” were introduced into the game so that essential commodities like food, water, and uniform gear could be regulated and not be totally susceptible to supply and demand. This would ensure that the economy never stalls completely and that players could always survive with basic resources. This ties into the “nudge theory” of economics. Nudges are subtle ways that governments decide what should be produced and how much of it should be made. Examples of this can be seen in the medical field where materials are very expensive and results uncertain. Governments can offer to buy fixed quantities at fixed prices so that companies can invest in production. This works the same way in Prosperous Universe where Market Makers ease the burden of certain materials so that players can concentrate on producing more advanced technology.
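One way to picture how a Market Maker keeps an essential commodity from being totally susceptible to supply and demand is as a price band. The sketch below assumes the Market Maker always stands ready to buy at a fixed lower price and to sell at a fixed higher price; that mechanism, the function name, and all the numbers are assumptions made for illustration rather than a description of the game's actual rules.

```python
def traded_price(free_market_price, mm_bid, mm_ask):
    """Price a player actually faces when a Market Maker bounds the market.

    mm_bid: assumed price at which the Market Maker always buys (the floor).
    mm_ask: assumed price at which the Market Maker always sells (the ceiling).
    """
    if mm_bid > mm_ask:
        raise ValueError("the buy price must not exceed the sell price")
    return max(mm_bid, min(free_market_price, mm_ask))


# Hypothetical band for a basic food item: buys at 10, sells at 20.
for price in (5, 15, 50):
    print(price, "->", traded_price(price, mm_bid=10, mm_ask=20))
# 5 -> 10
# 15 -> 15
# 50 -> 20
```

Inside the band, supply and demand set the price as usual. Outside it, producers can always sell at the floor and consumers can always buy at the ceiling, which is why the economy never stalls completely.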

When nudge strategies do not provide the desired result, governments may opt for a price-fixing approach to stabilize the economy. Franklin Roosevelt famously forced businesses to fix prices in the 1930s during the Great Depression. Similarly, Prosperous Universe governors have found ways to fix prices on certain commodities like coffee and aluminum. These particular commodities are both in high demand but are not essential to survival. In order to fix the price of these commodities, governors ordered their companies to produce an exorbitant amount of each commodity and started selling them at low prices so that many needy players could afford to buy them. Although it seems irrational for rich governors to supply others with cheap materials out of the goodness of their hearts, when a group of price-conscious players suddenly flocks to one source of supply, it creates a monopoly, and these governors certainly benefited from this “altruistic” strategy.

Prosperous Universe continues to grow daily as new players arrive and contribute to its expanding economy. As new political agendas emerge and new business strategies are uncovered, it raises the question of which new economic theories we will see demonstrated in the future. Will players continue to trust their partners and governors, or will a revolution begin that throws the economy into chaos? Anything is possible in the quest for intergalactic power and wealth.